Researchers from Google DeepMind and University of Alberta Explore Transforming of Language Models into Universal Turing Machines: An In-Depth Study of Autoregressive Decoding and Computational Universality

Researchers are investigating whether large language models (LLMs) can move beyond language tasks and perform computations that mirror traditional computing systems. The focus has shifted towards understanding whether an LLM can be computationally equivalent to a universal Turing machine using only its internal mechanisms. Traditionally, LLMs have been used primarily for natural language processing tasks like text generation, translation, and classification. However, the computational boundaries of these models still need to be fully understood. This study explores whether LLMs can function as universal computers, similar to classical models like Turing machines, without requiring external modifications or memory enhancements.

The primary problem addressed by the researchers is the computational limitations of language models, such as transformer architectures. While these models are known to perform sophisticated pattern recognition and text generation, their ability to support universal computation, meaning they can perform any calculation that a conventional computer can, is still debated. The study seeks to clarify whether a language model can autonomously achieve computational universality using a modified autoregressive decoding mechanism to simulate infinite memory and processing steps. This investigation has significant implications, as it tests the fundamental computational limits of LLMs without relying on external intervention or specialized hardware modifications.

Existing methods that aim to push the computational boundaries of LLMs typically rely on auxiliary tools like external memory systems or controllers that manage and parse outputs. Such approaches extend the models’ functionality but detract from their standalone computational capabilities. For instance, a previous study demonstrated how augmenting LLMs with a regular expression parser could simulate a universal Turing machine. While this showed promise, it did not prove that the LLM was responsible for the computation, as the parser played a significant role in offloading complex tasks. Thus, whether LLMs can independently support universal computation has yet to be solved.

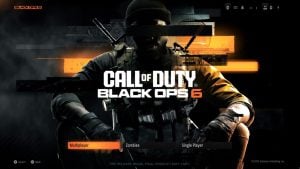

Researchers from Google DeepMind and the University of Alberta introduced a novel method by extending autoregressive decoding to accommodate long input strings. They designed an internal system of rules called a Lag system that simulates memory operations akin to those in classical Turing machines. This system dynamically advances the language model’s context window as new tokens are generated, enabling it to process arbitrarily long sequences. This method effectively transforms the LLM into a computationally universal machine capable of simulating the operations of a universal Turing machine using only its transformations.

The research involved creating a system prompt for an LLM named gemini-1.5-pro-001 that drives it to apply 2,027 production rules under deterministic (greedy) decoding. These rules simulate a Lag system, which has been computationally universal since the 1960s. The researchers built on this classical theory by developing new proof that the Lag system could emulate a universal Turing machine using a language model. This innovative approach reframes the language model’s decoding process into a sequence of discrete computational steps, making it behave as a general-purpose computer.

The proposed method’s performance was evaluated by configuring the language model to simulate a specific universal Turing machine, U15,2, defined by 2,027 production rules over an alphabet of 262 symbols. The study confirmed that gemini-1.5-pro-001, under the proposed framework, could apply these rules correctly to perform any computation within the theoretical framework of a universal Turing machine. This experiment established a clear correspondence between the language model’s operations and classical computational theory, affirming its ability to act as a general-purpose computing machine using only its internal mechanisms.

This study yields several key findings, which are as follows:

First, it establishes that language models can, under certain conditions, simulate any computational task achievable by a traditional computer.

Second, it validates that generalized autoregressive decoding can convert a language model into a universal computing entity when combined with a well-defined production rules system.

Third, the researchers demonstrate the feasibility of implementing complex computational tasks within the constraints of the model’s context window by dynamically managing the memory state during the decoding process.

Finally, it proves that complex computations can be achieved using a single system prompt, offering new perspectives on the design and utilization of LLMs for advanced computational tasks.

Key takeaways from the research:

The study demonstrated that gemini-1.5-pro-001 could simulate a universal Turing machine using 2,027 production rules and an alphabet of 262 symbols.

The model was shown to execute computational tasks autonomously without external modifications or memory enhancements.

The extended autoregressive decoding method allowed the language model to process sequences longer than its context window, proving that it can perform computations over unbounded input sequences.

The framework established that large language models can achieve computational universality, similar to classical models like Turing machines.

The research revealed that a single prompt could drive a model to perform complex computations, transforming the language model into a standalone general-purpose computer.

In conclusion, this research contributes significantly to understanding the intrinsic computational capabilities of large language models. It challenges the conventional views on their limitations by demonstrating that these models can simulate the operations of a universal Turing machine using only their transformations and prompts. It paves the way for exploring new, more complex applications of LLMs in theoretical and practical settings.

Check out the Paper. All credit for this research goes to the researchers of this project. Also, don’t forget to follow us on Twitter and join our Telegram Channel and LinkedIn Group. If you like our work, you will love our newsletter.. Don’t Forget to join our 50k+ ML SubReddit

[Upcoming Event- Oct 17 202] RetrieveX – The GenAI Data Retrieval Conference (Promoted)

Aswin AK is a consulting intern at MarkTechPost. He is pursuing his Dual Degree at the Indian Institute of Technology, Kharagpur. He is passionate about data science and machine learning, bringing a strong academic background and hands-on experience in solving real-life cross-domain challenges.